Foundation models are readily available, and the gaming industry is only beginning to understand their full capabilities. Various solutions have been built for real-time experiences, drawing on User Interface (UI) design methodologies to enhance User Experience (UX), but the use cases are either not well known or are limited.

Fortunately, developers can easily access innovative models and microservices through cloud APIs today. They can explore how Artificial Intelligence (AI) can affect their games and scale their solutions to more customers and devices than ever. AI technologies are already having a massive impact across industries, including media and entertainment, automotive, and customer service.

Diffusion models and large language models are becoming much more lightweight, and many game developers are looking for ways to run them natively on various hardware power profiles, including PCs, consoles, and mobile devices. However, understanding the capabilities of foundation models and their potential applications can be challenging, especially for gaming audiences unfamiliar with the technical terminology.

This guide breaks down the capabilities of foundation models in gaming into simple terms and provides examples of how they can be used to solve real-world problems. The aim is to make this exciting technology more accessible and understandable to a broader audience.

Understand How Foundation Models In Gaming Empower The Gameplay Businesses

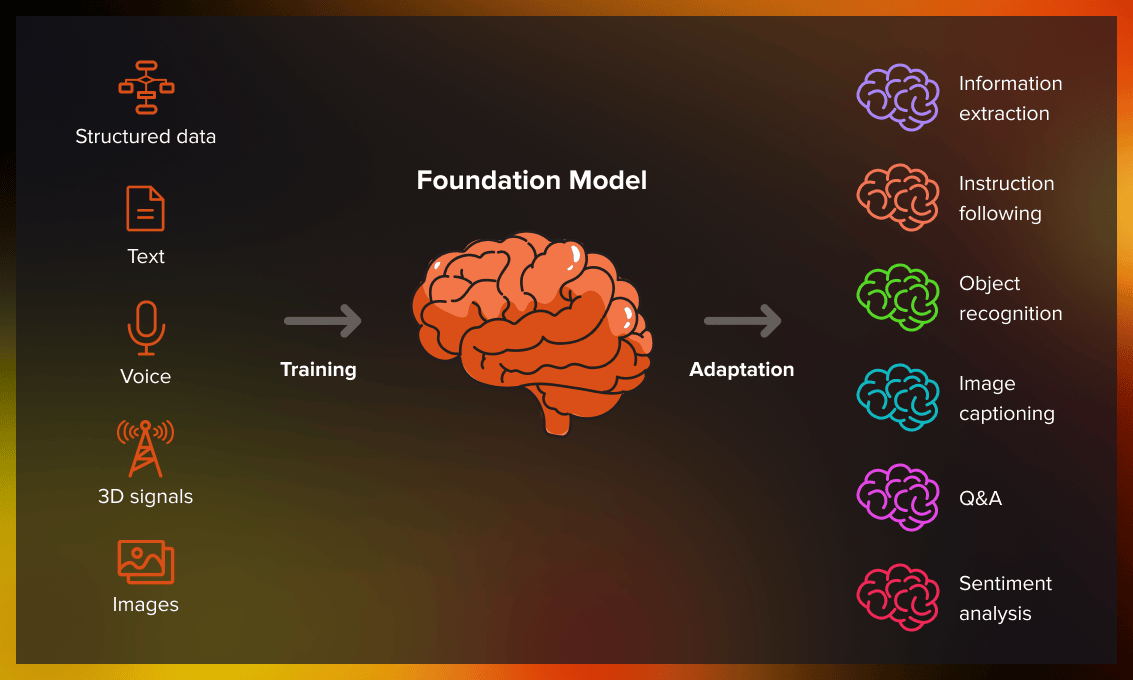

According to NVIDIA, foundation models are neural networks trained on massive amounts of data and then adapted to tackle various tasks. They can enable a range of general tasks, such as text, image, and audio generation. Over the last year, foundation models have grown rapidly in popularity and use, with hundreds now available.

Foundation models are poised to help developers realize the future of the gaming industry. They have opened up new possibilities, empowering designers and game developers to build higher-quality gaming experiences. For example, GPT-4 is a large multimodal model developed by OpenAI that can generate human-like text based on context and past conversations.

In other words, a foundation model is an AI neural network, trained on mountains of raw data, generally with unsupervised learning, that can be adapted to accomplish a broad range of tasks. Two essential concepts help define this umbrella category: data gathering is easier, and opportunities are as wide as the horizon. Consider an image-generation foundation model such as DALL·E 3.

DALL·E 3 is built natively on ChatGPT, which lets you use ChatGPT as a brainstorming partner to refine your prompts. It can create realistic images and artwork from a description written in natural language. Platforms like the NVIDIA NeMo framework and the Edify models in NVIDIA Picasso make it easy for gaming companies and developers to inject AI into their existing workflows.

Why Foundation Models In Gaming Are Special Tools For Game Developers

Since Stanford researchers introduced the foundation model concept in 2021, it has been one of the hottest trends in almost every industry. These models are among the first AI systems to reach the general public. A foundation model is a sizeable pre-trained machine learning model that can be further fine-tuned for a specific task, and such models have achieved state-of-the-art performance on a wide variety of tasks.

With the help of Natural Language Processing (NLP) techniques, these foundation models are designed to learn patterns and language structures and to understand game state changes, so they can evolve and progress alongside the player in the game. As NPCs become increasingly dynamic, real-time animation and audio that sync with their responses become essential.

Foundation Models are readily available, and the gaming industry is only beginning to understand their full capabilities. From creating lifelike characters that convey emotions to transforming simple text into captivating imagery, they are becoming essential in accelerating developer workflows while reducing overall costs. These powerful AI models have unlocked limitless possibilities.

In research settings, foundation models have achieved state-of-the-art performance on benchmarks across many modalities, including text, images, speech, tabular data, protein sequences, organic molecules, and reinforcement learning. Additionally, since data is naturally multimodal in some domains (such as video), multimodal models can effectively combine relevant information about a domain.

Resource Reference: Learn More About What Makes Foundation Models So Special

Multimodal models can also adapt quickly to tasks involving multiple modes. AI researchers and game design engineers are most excited about generalizability: a well-trained foundation model can make accurate predictions, or generate coherent text and images, for inputs it has never seen before, without additional training or fine-tuning.

Today, transformer models, Large Language Models (LLMs), and other neural networks still being built are part of this new category. Like a prolific jazz composer, researchers have been generating AI models feverishly, exploring new architectures and use cases. As they focus on breaking new ground, they sometimes leave the job of categorizing their work to others.

Foundation models also empower designers and game developers to build higher-quality gaming experiences. Although various solutions have been built for real-time experiences, use cases in the gaming market are still limited. Fortunately, developers can easily access models and microservices through cloud APIs today. With that in mind, below are some more benefits of foundation models.

1. Intelligent Content Material Design

Some top use cases are intelligent agents, AI-powered animation, and asset creation. For example, many creators today are exploring models for creating intelligent non-player characters, or NPCs. Custom LLMs fine-tuned on data from specific games can generate human-like text, understand context, and respond to prompts coherently. Developers also use NVIDIA Riva to create expressive character voices using speech and translation AI, and designers are tapping NVIDIA Audio2Face for AI-powered facial animations.

2. In Pre-Production And Production

Asset creation during game development’s pre-production and production phases can be time-consuming and expensive. Generative AI is an umbrella term for transformers, large language models, diffusion models, and other neural networks that capture people’s imaginations because they can create text, images, music, software, and more. The blending of AI algorithms and model architectures, known as homogenization, is a trend that has helped form foundation models. Many emerging capabilities of foundation models are still being discovered, and several of them are crucial for asset and animation generation.

3. Unlimited Game Development Methods

Diffusion and large language models are becoming much more lightweight as developers look to run them natively on various hardware power profiles, including PCs, consoles, and mobile devices. The accuracy and quality of these models will only continue to improve as developers look to generate high-quality assets, in particular those that need little to no touching up before being dropped into an AAA gaming experience. Multimodal models show great promise in improving the animation of real-time characters, one of the most time-intensive and expensive processes of game development. These models may help make characters’ locomotion match that of real-life actors, infuse style and feel from various inputs, and ease the rigging process.

4. Meeting All The Design Environment Settings

Foundation models will also be used in areas that are challenging for developers to address with traditional technology. For example, autonomous chatbots and cloud-based agents can help analyze and detect world space during game development, accelerating quality assurance processes. The rise of multimodal foundation models may help combine text, image, audio, and other inputs simultaneously and enhance player interactions with intelligent NPCs and other game systems. Developers can also use additional input types to improve creativity and enhance the quality of generated assets during production.

5. Limitless Revenue And Skills Development

Generative AI has the potential to yield trillions of dollars of economic value. With state-of-the-art diffusion models, developers can iterate more quickly. They can explore how AI can affect their games and scale their solutions to more customers and devices than ever. Developers can also free up time for the most critical aspects of the content pipeline, such as developing higher-quality assets and iterating. The ability to fine-tune these models on a studio’s own data repository helps keep the generated outputs consistent in style.

Getting To Know How Foundation Models In Gaming Design Sectors Are Built

Foundation models generally learn from unlabeled datasets, saving the time and expense of manually describing each item in massive collections. Earlier neural networks were narrowly tuned for specific tasks. With some fine-tuning, foundation models can handle jobs from translating text to analyzing medical images. They are demonstrating “impressive behavior” while being deployed at scale.

Foundation models are large, complex models designed to be flexible and reusable across various domains and industries. They work because of transfer learning and scale, and they can be used as a base for AI systems performing multiple tasks. Organizations can use unlabeled data to create their own foundation models.

Resources and time are necessary to train a base model alongside a certain level of expertise. Currently, many developers within the gaming industry are exploring off-the-shelf models. But they need custom solutions that fit their specific use cases. They need foundation models trained on commercially safe data and optimized for real-time performance — without excessive design costs.

AI has a massive impact across industries, including media and entertainment, automotive, and customer service, and these advances pave the way for game developers to create more realistic and immersive in-game experiences. After training, a foundation model is evaluated on quality, diversity, and speed. It can be deployed for production once it passes the relevant tests and evaluation steps.

Some essential performance evaluation methods:

- Tools and frameworks that quantify how well the model predicts a sample of text

- Metrics that compare generated outputs with one or more references and measure the similarities between them

- Human evaluators who assess the quality of the generated output on various criteria

- Other crucial and robust performance evaluation methods also exist.
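The first two kinds of method in the list above can be illustrated with a minimal Python sketch. It assumes that per-token probabilities from a model are already available, and it uses a simple word-overlap F1 score as a stand-in for reference-based metrics; the function names `perplexity` and `unigram_f1` are hypothetical and chosen only for this example.

```python
import math
from collections import Counter


def perplexity(token_probs):
    """Perplexity from the probabilities a model assigned to each observed token.

    Lower values mean the model predicted the sample of text better.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


def unigram_f1(generated, reference):
    """Word-overlap F1 between a generated string and one reference string."""
    gen = Counter(generated.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((gen & ref).values())  # words shared by both, with counts
    if overlap == 0:
        return 0.0
    precision = overlap / sum(gen.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Illustrative inputs only; a real evaluation would use model outputs.
    print(perplexity([0.25, 0.25, 0.25, 0.25]))
    print(unigram_f1("the guard sleeps", "the guard opens the gate"))
```

Automatic scores like these are cheap to compute, which is why they are usually paired with human evaluation rather than used as a replacement for it.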

Lack of knowledge of custom requirements and difficulties in designing foundation models have slowed their adoption rate. However, innovation within the generative AI space is swift. Once major hurdles are addressed, developers of all sizes will use foundation models to gain new efficiencies in game development, accelerate content creation, and help create new gameplay experiences.

Using the NVIDIA NeMo framework, organizations can quickly train, customize, and deploy generative AI models at scale. Using NVIDIA Picasso, teams can fine-tune pre-trained Edify models with their enterprise data to build custom products and services for generative AI images, videos, 3D assets, texture materials, and 360 HDRi.

Training a foundation model can be time-consuming, especially given the size of the model and the amount of data required. Fortunately, hardware like NVIDIA A100 or H100 Tensor Core GPUs and high-performance data systems like the NVIDIA DGX SuperPOD can accelerate training. For example, GPT-3 was reportedly trained on over 1,000 NVIDIA A100 GPUs over about 34 days.

Exploring The Basic Foundation Models In Gaming That Are Already In Use

Generally speaking, hundreds of foundation models are now available. One paper catalogs and classifies over 50 major transformer models alone. The Stanford group benchmarked 30 foundation models, noting the field is moving so fast that they did not review some new and prominent ones. Over time, these foundation models will likely keep getting larger and more complex.

Other notable organizations, primarily in cloud services, also use these foundation models. For example, Microsoft Azure worked with NVIDIA to implement a transformer for its Translator service, which helped disaster workers understand Haitian Creole while responding to a 7.0 earthquake. Microsoft has also announced plans to enhance its web browser and search engine.

Microsoft intends to do this with the help of ChatGPT and related innovations. Google, for its part, announced Bard, an experimental conversational AI service, and plans to plug many of its products into the power of its foundation models, such as LaMDA, PaLM, Imagen, and MusicLM.

NLP Cloud, a member of the NVIDIA Inception program that nurtures cutting-edge startups, uses about 25 large language models in a commercial offering that serves airlines, pharmacies, and other users. Experts expect a growing share of foundation models to be made available on sites like Hugging Face’s model hub and other open-source platforms.

The Artificial Intelligence Foundation Models For Gaming Business Sectors

The NVIDIA NeMo framework aims to let any business create its own billion- or trillion-parameter transformers to power custom chatbots, personal assistants, and other AI applications. It was used to create the 530-billion-parameter Megatron-Turing Natural Language Generation (MT-NLG) model that powers TJ, the Toy Jensen avatar that gave part of the keynote at NVIDIA GTC last year.

Foundation models connected to 3D platforms like NVIDIA Omniverse will be vital to simplifying metaverse game development, Virtual Reality (VR), and the 3D evolution of the Internet. These models will power applications and assets for entertainment and industrial users. For example, factories and warehouses can apply foundation models inside digital twins.

These digital twins are realistic simulations that help find more efficient ways of working. Foundation models can also ease the job of training autonomous vehicles, by creating realistic environments for them, and of training Collaborative Robots (Cobots) that assist humans on factory floors and in logistics centers.

Given that future AI-driven systems will likely rely heavily on foundation models, it is imperative that we, as a community, come together to develop more rigorous principles for foundation models and guidance for their responsible development and deployment. The range of applications is already wide: one venture capital firm lists 33 use cases for generative AI, from ad generation to semantic search.

Some Notable Challenges That Game Developers Must Address In The Future

Foundation models have limitations that should be considered before they are used in production. One risk is that they may not perform as well on niche or specific tasks not covered by the pre-training data. It may therefore be necessary to fine-tune the models on data more relevant to the specific task to improve their performance. That fine-tuning dataset should be as large and diverse as possible.

Too little or poor-quality data can lead to inaccuracies, sometimes called hallucinations, or cause finer details to go missing in generated outputs. Second, the dataset must be carefully prepared. This includes cleaning the data, removing errors, and formatting it so the model can understand it. Bias is also a pervasive issue when preparing a training dataset.
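The preparation steps mentioned above (cleaning the data, removing errors, and formatting it for the model) might look something like the following minimal sketch. The function name `prepare_records`, the `min_chars` threshold, and the JSON Lines output format are assumptions made for illustration; a real pipeline depends on the model and on the studio's data.

```python
import json
import unicodedata


def prepare_records(raw_lines, min_chars=10):
    """Clean raw text lines and return JSON Lines strings, one per kept record."""
    seen = set()
    records = []
    for line in raw_lines:
        # Normalize Unicode forms so visually identical text compares equal.
        text = unicodedata.normalize("NFKC", line)
        # Collapse runs of whitespace and trim the ends.
        text = " ".join(text.split())
        # Drop fragments too short to be useful (threshold is an assumption).
        if len(text) < min_chars:
            continue
        # Skip case-insensitive duplicates.
        key = text.casefold()
        if key in seen:
            continue
        seen.add(key)
        # Format each record as one JSON object per line.
        records.append(json.dumps({"text": text}, ensure_ascii=False))
    return records


if __name__ == "__main__":
    sample = [
        "  The  knight guards   the gate. ",
        "the knight guards the gate.",
        "ok",
        "A dragon sleeps under the hill.",
    ]
    for record in prepare_records(sample):
        print(record)
```

Deduplication and length filtering are only two of many possible cleaning rules; they are shown here because they are easy to verify by hand.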

Measuring and reducing these inconsistencies and inaccuracies is therefore essential for the gaming sector. Models may also inadvertently perpetuate biases present in their training data; preventing and mitigating these biases is an active area of research. Fortunately, there are best practices that can reduce the impact of biases in pre-trained models.

From enhancing dialogue and generating 3D content to creating interactive gameplay, foundation models have opened up new opportunities for developers to forge the future of gaming experiences. However, new uses for foundation models emerge daily, each with its own challenges, so understanding and managing their risks is essential.

The most notable risks are as follows:

- amplifying bias implicit in the massive datasets used to train models,

- introducing inaccurate or misleading information in images or videos,

- violating intellectual property rights of existing works, etc.

During production and at run time, middleware, tools, and game developers can leverage pre-trained foundation models to help address these issues. As noted earlier, training a base model takes resources, time, and expertise, so many developers are exploring off-the-shelf models while still needing custom solutions that fit their specific use cases.

They need models trained on commercially safe data and optimized for real-time performance, without exorbitant deployment costs. The difficulty of meeting these requirements has slowed the adoption of foundation models. However, innovation within the generative AI space is swift, and once major hurdles are addressed, developers of all sizes, from startups to AAA design studios, will be able to adopt them.

In Conclusion

The potential of foundation models in the gaming industry is fascinating: they offer new opportunities that have yet to be imagined. As these opportunities emerge, they will help creative minds transform their ideas into tangible, compelling products in record time. Still, more research is essential to unravel their full potential.

For game developers, the advances in AI Technologies pave the way for creating more realistic and immersive in-game experiences. From creating lifelike characters that convey emotions to transforming simple text into captivating imagery, foundation models are becoming essential in accelerating developer workflows while reducing overall costs. These are powerful AI technologies in gaming.

Resource Reference: Exploring The Cloud Gaming Future | Its Technological Impact

Current ideas for safeguards include filtering prompts and their outputs, recalibrating models on the fly, and scrubbing massive datasets. These are the issues that AI researchers and game developers are working through as they create the future. For these models to be widely deployed, however, we must invest heavily in safety.
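As a rough illustration of the first safeguard, filtering prompts and their outputs, the sketch below wraps a text generator with a simple term-based check on both sides. Everything here is an assumption for illustration: `make_filter`, `guarded_generate`, the blocked-term list, and the `generate` callable are hypothetical, and production systems typically rely on far more capable classifiers than keyword matching.

```python
import re


def make_filter(blocked_terms):
    """Return a function that reports whether text contains any blocked term."""
    patterns = [
        re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        for term in blocked_terms
    ]
    return lambda text: any(p.search(text) for p in patterns)


def guarded_generate(prompt, generate, is_blocked, fallback="[response withheld]"):
    """Run `generate` only on allowed prompts and withhold blocked outputs."""
    if is_blocked(prompt):      # filter the prompt before it reaches the model
        return fallback
    output = generate(prompt)   # `generate` stands in for any model call
    if is_blocked(output):      # filter the output before it reaches the player
        return fallback
    return output


if __name__ == "__main__":
    is_blocked = make_filter(["password"])  # hypothetical blocked term
    npc = lambda prompt: "Welcome, traveler."  # stand-in for a real model
    print(guarded_generate("greet the player", npc, is_blocked))
    print(guarded_generate("tell me the admin password", npc, is_blocked))
```

Passing the generator in as a callable keeps the filter independent of any particular model or API, so the same wrapper could sit in front of an NPC dialogue system or an asset-description tool.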

Developers can use foundation models to gain new efficiencies in game development and accelerate content creation. Additionally, these models can help create entirely new gameplay experiences. To learn more about these foundation models, join this Discord community to engage with experts and discuss all things multimodal AI with professionals.
