When it comes to Site Ranking Factors, Linked Data empowers people who publish and use information on the Web. It is a way to create a network of standards-based, machine-readable data across Web sites. Basically, it allows an application to start at one piece of Linked Data and follow embedded links to other pieces of Linked Data that are hosted on different sites across the Web.

As an example, JSON-LD is a lightweight Linked Data format that is easy for humans to read and write. It is based on the already successful JSON format and provides a way to help JSON data interoperate at Web scale. That makes it an ideal data format for programming environments, REST Web services, and unstructured databases such as CouchDB and MongoDB.

On the tooling side, the JSON-LD Playground is a web-based JSON-LD viewer and debugger. If you are interested in learning JSON-LD, this tool will be of great help to you. Developers may also use it to debug, visualize, and share their JSON-LD markup.
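To make the format concrete, here is a minimal sketch of a JSON-LD document built in Python. The schema.org vocabulary, the `Article` type, and every value in it (headline, author name, date) are illustrative assumptions and are not taken from any real page.

```python
import json

# A small JSON-LD document: plain JSON plus the "@context" and "@type"
# keywords that tell a consumer which vocabulary the keys belong to.
# All values below are hypothetical placeholders.
article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Example headline (hypothetical)",
    "author": {"@type": "Person", "name": "Jane Doe"},
    "datePublished": "2020-01-01",
}

# Serialize it and wrap it in the script tag commonly used to embed
# JSON-LD in an HTML page.
json_ld = json.dumps(article, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The printed markup can be pasted into the JSON-LD Playground mentioned above to inspect how it is interpreted.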

What Are The Site Ranking Factors?

Generally speaking, people are always talking about various site ranking factors. And by now, from my previous Best SEO Tactics guide, you already know the secret ingredients to the magical algorithmic formula of Google.

And if you know them and find a way to please these factors, you’re well on your way to that coveted number one spot. Or so people seem to think! In general, though, chasing all these individual ranking factors is not a good tactic.

Focusing on building the best site is. And that’s why I thought it’d be a cool idea to play a game of “is-a-ranking-factor” in this exclusive post. Of course, with changing times, SEO ranking requirements keep changing too, and it can be hard to keep up with the latest developments. But if you want your site to get traffic, you have to be in the know.

How do Site Ranking Factors work?

When people want to find information, they type or say words related to what they’re looking for (those are called keywords).

But search engine rankings are not just about keywords; they’re also about the quality of information.

According to Google’s own search quality rating guidelines, when it indexes the main content of each page, it checks for various factors.

Google checks for factors like:

- The purpose of the page

- Expertise, authority, and trustworthiness – not just from the site and the page content, but expertise from the individual creator of the content too.

- Content quality and amount

- Website info and info about the content creator

- Website reputation and content creator reputation

These go into its ranking algorithm and help to determine SEO ranking.

Based on the rating guidelines above, Google shows searchers the most relevant, high-quality results related to what they’re looking for. The most relevant are shown first, with the rest being shown over successive pages.

One of the goals of addressing SEO ranking factors is to let Google know when pages on your site are relevant to particular search queries, so people will click the links and visit your site.

Let’s be clear, though: there’s never a guarantee of a page one or #1 rank, and with SEO guidelines changing all the time, search engine rankings change with them.

Which are the Best SEO Ranking Factors?

First of all, Site Ranking factors can relate to a website’s content, technical implementation, user signals, and backlinks profile, or to any other features the search engine considers relevant.

And, therefore, understanding ranking factors is a prerequisite for effective search engine optimization.

As an example, every year Searchmetrics analyzes the top 30 search results for 10,000 keywords, nearly 300,000 URLs in total, in its Site Ranking Factors study. It evaluates them against a number of factors that it expands from year to year, such as the number of backlinks, text length, and keyword or content features.

But the underlying question is always: what differentiates pages that have climbed the rankings from those that are placed further back in the SERPs? Do they have more backlinks, text, keywords, and so on?

1. Interpretation of Correlation Calculations

Based on the presence and extent of each factor examined across the top 30 positions, they calculate a rank-correlation coefficient, specifically the Spearman correlation (as defined on Wikipedia).

These coefficients indicate the relationship between two variables: the ranking on the one hand, and the occurrence or extent of each factor on the other. This makes it possible to detect how the value studied differs across search result positions (i.e. the correlation).

As shown in the graph above, there is a curve of average values for each item. In the graph, four example correlations and the respective curves are shown.

Here are the example factors:

- A: Zero Correlation – linear curve, horizontal/high average

- B: Positive Correlation (highest) – exponential function, falling

- C: Negative Correlation (lowest) – linear curve, rising

- D: Positive Correlation – irregular curve, falling

The y-axis indicates the average value for all 10,000 URLs studied at position X (x-axis). According to the analysis, factors with the value “zero” show no measurable correlation between the factor and good or bad Google rankings.

In other words, the higher the correlation value, the greater and more regular the differences between positions. Negative values are best read as the opposite statement, interpreted positively. Simply put, the larger the differences from positions 1 to 30, the higher the correlation value.

Average values are always used to interpret the factors. For example, factors B and C from the above graph have the same absolute correlation value (that is, 1), but their respective curves are completely different.

For factor A, the average value is 95 (y-axis) at every position (x-axis), but it could just as well be 5. The correlation value would remain identical at 0, yet the interpretation of the factor would be completely different.
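The example factors A, B, and C can be reproduced with a small, self-contained Spearman calculation. This is only a sketch under stated assumptions: the factor values are invented to match the curve shapes described, the sign is flipped so that a curve falling from position 1 to 30 counts as positive (the convention the list of example factors uses), and a constant series is reported as 0 by convention even though the coefficient is strictly undefined there. It is not the study's actual code.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman correlation: the Pearson correlation of the two rank lists."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        return 0.0  # assumed convention: a constant series shows no correlation
    return cov / (var_x * var_y) ** 0.5


positions = list(range(1, 31))  # search result positions 1 to 30

# Invented average values per position, shaped like the example curves.
factor_a = [95] * 30                                   # flat line
factor_b = [100 * 0.8 ** (p - 1) for p in positions]   # exponential, falling
factor_c = [10 + 3 * p for p in positions]             # linear, rising


def ranking_correlation(values):
    """Assumed sign convention: higher values at better positions is positive."""
    return -spearman(positions, values)


for name, values in [("A", factor_a), ("B", factor_b), ("C", factor_c)]:
    print(name, round(ranking_correlation(values), 2))
```

Because Spearman works on ranks, the exponential curve B and the linear curve C reach the same magnitude despite their very different shapes, which is why the average values are needed to interpret a factor.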

2. Site Ranking Factors through Search Engine Algorithms

Basically, a search engine uses algorithms to evaluate websites by topic and relevance. This evaluation structures the pages in the search engine index, so that user queries ultimately return the best possible ranking of results.

The criteria used to evaluate web pages and produce this ranking are generally referred to as site ranking factors. The reason they are needed is straightforward: the number of documents on the Internet, and in the search index, is rising exponentially.

This, therefore, makes it impossible to rank these pages without an automatic algorithm, despite the existence of human ‘quality raters’. However, this algorithm is both mandatory (order, after all, requires a pattern), and, at the same time, the best-kept secret in the Internet business.

This is true because, for all search engines, it is essential to keep the underlying factors that make up the algorithm strictly confidential. But, this inherent secrecy has less to do with competition between search engines than it has to do with more basic reasons.

After all, if the ways to obtain good rankings were widely known, they would become irrelevant as they would be constantly manipulated. No one but Google knows what the real Ranking Factors are. That’s why jmexclusives, too, analyzes data via rank correlation analysis, producing findings based on the properties of existing, organic search results.

From these, we can draw reasoned conclusions about what the Ranking Factors, and their respective weightings, could be, with the immense database providing a reliable foundation for these analyses.

3. Database for Searchmetrics Site Ranking Factors

For instance, our analysis is based on search results for a very large keyword set of 10,000 search terms for Google in major cities. The starting pool is always the top 10,000 search terms by search volume, from which specific navigation-oriented keywords are removed so as not to distort the evaluations.

Navigation-oriented keyword searches are those where, more or less, all results but one are irrelevant to the searcher (e.g. “Facebook Login”). The database for the Ranking Factor analyses is always the first three organic search results pages.

As a rule, the keyword set in any given year coincides by more than 90 percent with the set from the previous year. Here jmexclusives agency has sought a middle ground that takes two factors into account: preserving the “greatest common denominator” as the optimal basis for comparison with the previous study, while also including “new keywords” that have grown in search volume into the top 10,000.

In simple terms, the database at jmexclusives is always current, so new, relevant keywords are used for current analyses, such as “Samsung Galaxy S5” or “iPhone 6”, which did not exist previously.
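The two steps described here, dropping navigation-oriented keywords and checking how much of the keyword set carries over between years, can be sketched as follows. The marker list, the function names, and the tiny keyword lists are all hypothetical; a real study would use a far more careful classification of navigational queries.

```python
# Hypothetical heuristic: treat any keyword containing one of these
# markers as navigation-oriented. This is an illustration only.
NAVIGATIONAL_MARKERS = ("login", "sign in")


def drop_navigational(keywords):
    """Keep only keywords that do not look navigation-oriented."""
    return [
        kw for kw in keywords
        if not any(marker in kw.lower() for marker in NAVIGATIONAL_MARKERS)
    ]


def overlap_share(previous, current):
    """Share of the current keyword set that was already in the previous one."""
    previous_set, current_set = set(previous), set(current)
    return len(previous_set & current_set) / len(current_set)


# Invented miniature keyword pools for two consecutive years.
last_year = drop_navigational(
    ["seo tips", "Facebook Login", "best laptop", "weather"]
)
this_year = drop_navigational(
    ["seo tips", "Facebook Login", "best laptop", "weather", "iPhone 6"]
)

share = overlap_share(last_year, this_year)
print(f"{share:.0%} of this year's keywords were also in last year's set")
```

With these toy lists the share comes out at 75%; the study itself reports an overlap of more than 90 percent.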

4. The Brand Reputation Presence & Awareness Online

One of the constants in the site ranking factor studies is an interesting peculiarity in the data that we have dubbed the “brand factor”, present in many factors and observations.

What we mean by brand factor is the observation that websites from high-profile brands, or with a certain authority, generally occupy the very top positions in the rankings, even if they disregard particular factors that the URLs ranking slightly lower adhere to.

For example, on average, brands tend not to have an h1 tag on their page. Their content also has a lower word count, and the keyword is not found in the meta title or description as often. In a nutshell, from an SEO perspective, they are less optimized. On the other hand, brand websites typically feature many more backlinks and social signals than other URLs.

Google is already very efficient at identifying brands from particular sectors and at allocating their URLs a preferential ranking. Values like recognizability, user trust, and brand image are also reflected in the SERPs to a certain extent.

5. Black Hat SEO (Keyword Stuffing, Cloaking & Correlation)

At the beginning of the search engine age, Google considered pages relevant for specific topics when the subject-associated search terms (keywords) were frequently used. Site operators soon took advantage of this knowledge, achieving very good positions in the SERPs by ‘stuffing’ pages with keywords.

This enabled their often non-relevant pages to be found in well-ranking positions for the intended search terms. It not only generated real competition between search engines and SEOs but also produced the myth of the ranking factor.

The goal of semantic search created a network of criteria that were initially strictly technical (e.g. the number of backlinks) but were later joined by less technical components (e.g. user signals). This development, along with the pursuit of the optimum result, has culminated in the constant evolution of ranking factors.

The endless feedback loop of permanent, iterative update cycles is designed purely to generate search results that offer constant improvements to the individual searcher. The structure and complexity of ranking factors, together with the strong influence of user signals, are designed to produce the most relevant content for the user.

What are the Most Important SEO Ranking Factors?

When it comes to Search Engine Optimization (in short SEO), ranking refers to your content’s position on the Search Engine Results Pages (in short SERPs).

Having a #1 ranking means that when people search for a particular term, your web page is the first result, apart from promoted results, featured snippets, and answer boxes.

Well-optimized sites get more and more traffic over time, and that means more leads and sales. Without SEO, searchers won’t be able to find your site, and all your hard work will be for nothing.

Many people wonder how Google rankings work. To understand how search engine ranking factors work, consider the following key elements.

- A Secure and Accessible Website

- Page Speed (Including Mobile Page Speed)

- Mobile Friendliness

- Domain Age, URL, and Authority

- Optimized Content

- Technical SEO

- User Experience (RankBrain)

- Links

- Social Signals

- Real Business Information

As noted earlier, SEO requirements keep changing, so it pays to revisit these elements regularly if you want your site to keep getting traffic.

Appearing in the top 3 results is excellent because almost half of the clicks on any search results page go to those positions. And, of course, appearing on the first page at all, within the top 10 results, is also useful. That’s because 95% of people never make it past the first page.

What Does Google Look for in SEO?

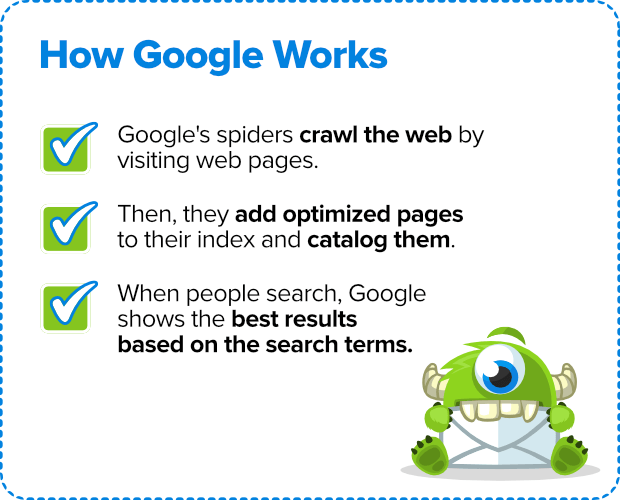

Google’s own stated purpose is to “organize the world’s information and make it universally accessible and useful”. Delivering relevant search results is a huge part of that. Below is a simple illustration of how it works:

First, Google’s search bots (pieces of automated software called “spiders”) crawl the web… All that really means is they visit web pages.

Second, they add correctly optimized and crawlable pages to Google’s index and catalog them.

Third, when people search Google, it shows what it thinks are the most appropriate results based on the search terms they enter (out of the trillions of pages in Google’s index).

At that point, you have to rely on your page titles and meta descriptions to get searchers to click your link and visit your site.
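The crawl, index, and serve steps described above can be sketched with a toy inverted index. This is a deliberately simplified illustration and says nothing about how Google actually works: the example.com URLs, the page texts, and the scoring by counting matching words are all assumptions made for the sketch.

```python
from collections import defaultdict

# Step 1 (crawl): pretend these pages were fetched by a crawler.
# URLs and texts are hypothetical.
pages = {
    "https://example.com/seo": "seo ranking factors explained",
    "https://example.com/speed": "page speed and mobile ranking",
    "https://example.com/links": "backlinks and social signals",
}


def build_index(pages):
    """Step 2 (index): map every word to the set of URLs containing it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index


def search(index, query):
    """Step 3 (serve): order URLs by how many query words they contain."""
    scores = defaultdict(int)
    for word in query.lower().split():
        for url in index.get(word, ()):
            scores[url] += 1
    # Highest score first; ties are broken alphabetically by URL.
    return sorted(scores, key=lambda url: (-scores[url], url))


index = build_index(pages)
print(search(index, "mobile ranking"))
```

For the query "mobile ranking", the speed page matches both words and is listed ahead of the SEO page, which matches only one. A real search engine weighs far more signals than word overlap.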

How do you Monitor Search Engine Rankings?

Before you can improve your SEO ranking, you’ll need to know your starting point. There are a couple of ways to find this.

First of all, you could search Google using the terms you think your customers will be using. Use an incognito or private window in your browser, so the results aren’t skewed by Google’s personalization. And then see where your content appears.

However, that’s totally impractical for established sites with hundreds of pages, so you’ll need a tool to do it for you. For example, with SEMRush, you can type your domain into the search box, wait for the report to run, and see the top organic keywords you are ranking for.

Alternatively, use their keyword position tracking tool to track the exact keywords you’re trying to rank for.

What do Google-friendly Sites Entail?

Before I conclude, it’s fair to say that even Google itself doesn’t know exactly how its own algorithm is made up, so complex have the evaluation metrics become.

The objective of the “Ranking Factors” studies is not to produce a gospel of absolute truth. Instead, we consider these studies to be a methodological analysis from an interpretative perspective.

What this means is that we aim to provide the online industry with easy access to a data toolbox. By using this toolbox, the industry can make informed decisions based on our intensive research across a wide spectrum of criteria.

From a business perspective, long-term success can be achieved with a sustainable business strategy that incorporates relevant quality factors to maintain strong search positions. This approach means disregarding options for negative influence and keeping a clear focus on relevant content, while at the same time combating spam and short-termism.

If you need more support on this, please Contact Us and let us know how we can help you.