According to a Perficient blog post, one of the questions swirling around the forums and blogs these days is whether you should noindex RSS feeds to avoid duplicate content problems. The source of the concern is that RSS feeds are crawled by search engines. In addition, many people now recommend that you include the entire content of your articles directly in your feed.

A recent Rick Klau interview about FeedBurner offers a few insights on this. So, if a search engine sees the content on your website and also sees it in your feed, will that be seen as duplicate content? There certainly have been some instances of RSS feeds turning up in the search results of search engines.

For those of you worried about this possibility, Yahoo’s spec for noindexing RSS feeds has more details. Our understanding is that both Google and Yahoo! will honor this noindex request for your feed. But let’s step back and think about how today’s search engines (Google, Bing, Yahoo, Yandex, and the like) try to deal with duplicate content.

They are always trying to figure out who the authoritative source of the content is. It should be pretty obvious to the search engine when it’s crawling an RSS feed. It should also be relatively obvious that the RSS feed for a site’s content is not the authoritative source.

Should You Noindex RSS Feeds For Your Blogs Or Not?

Well, this remains a two-sided question with two possible answers, and by the end of this post we’ll try to gather the best of them. Some think there’s no point in noindexing RSS feeds, as it does not help your website rank better. Using the noindex meta-tag for such feeds may have made sense in the past, but today it is not necessary.

Feeds are simple for Google and other contemporary search engines to recognize, so they already exclude them from web search results. Also, if you noindex your RSS feeds, you are possibly inviting spammers to your site, since crawling feeds is simple as pie: even a child could program a crawler that scrapes your content from the feed in no time.

On the other side, by having the abstracts of your latest publications indexed by the likes of JournalTOCs, search engines, aggregators, or any web service, you’ll ensure that hundreds of thousands of potential readers can discover your content. Thus, you should make sure you ARE NOT using the noindex meta-tag.

The noindex meta-tag may help in Search Engine Optimization (SEO), but it should be used wisely rather than on the assumption that it’s always a good idea. Others still suggest that indexed RSS feeds are bad because they could be considered thin content, and that many such feeds could therefore lower the overall domain quality rating.

In addition, providing an RSS feed for your website often helps a search engine find your new content more rapidly. In fact, Adam Lasnik commented that during his time at Google he had not heard of any instance of a website being negatively affected by a duplicate content issue with an RSS feed.

Based on this input, we would say that there is extremely little risk in letting your feed get indexed. When we first learned about the issue, we did go ahead and noindex our feeds. But after conversations with a few Google insiders, we became convinced that it’s just not a problem, and letting feeds be indexed is the recommendation we make to our clients as well.

It should be pointed out that robots.txt directives function differently from those of the meta noindex variety. As far as we know, disallow rules specified via robots.txt forbid compliant search engines from accessing matching resources entirely. Meta noindex rules, on the other hand, do not prevent search engines from accessing and crawling the page.

This means search engines can still follow the links contained within noindexed content. A subtle distinction, perhaps, but important nonetheless.
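To make the distinction concrete, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content, the `ExampleBot` user agent, and the example.com URLs are all made up for illustration; the point is only that a disallow rule stops a compliant crawler before it fetches the page, so it never gets to see any meta noindex tag on it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without making any network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# A compliant crawler asks before fetching; a disallowed URL is never requested.
admin_allowed = parser.can_fetch("ExampleBot", "https://example.com/wp-admin/options.php")

# The feed is not disallowed, so it can be fetched, and any noindex
# instruction it carries can then be read and honored.
feed_allowed = parser.can_fetch("ExampleBot", "https://example.com/feed/")

print(admin_allowed, feed_allowed)
```

In other words, a noindex instruction only works on URLs that robots.txt still lets the crawler reach.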

Deciding Whether To Noindex RSS Feeds Or Not

In our case, we can simply say that feed URLs are not created for humans, but for RSS feed crawlers and readers. They are only basic code versions of your actual content pages. You do not want these pages to be indexed, as Google doesn’t like them and would most likely not show them to users anyway. Thus, noindexing them is best practice and not an issue.

Moving on, the best thing to do is to investigate whether the X-Robots-Tag: noindex header is really needed for RSS feeds and, more so, whether Google is smart enough to exclude RSS feeds from the search results when the header is not present. If the X-Robots-Tag: noindex header is still needed, it helps to provide a way (possibly a filter) to switch it off for particular feeds.
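As a rough sketch of what such a filter could look like, the function below builds the response headers for a feed and only adds `X-Robots-Tag: noindex` when the feed kind is not on an exemption list. The function name, the `feed_kind` labels, and the default exemption for podcast feeds are assumptions made for this example, not the API of any particular plugin.

```python
from typing import Dict, Iterable


def feed_response_headers(
    feed_kind: str,
    noindex_feeds: bool = True,
    exempt_kinds: Iterable[str] = ("podcast",),  # hypothetical exemption list
) -> Dict[str, str]:
    """Return HTTP headers for a feed response.

    The X-Robots-Tag header is added only when noindexing is enabled
    and the feed kind is not exempt.
    """
    headers = {"Content-Type": "application/rss+xml; charset=UTF-8"}
    if noindex_feeds and feed_kind not in set(exempt_kinds):
        headers["X-Robots-Tag"] = "noindex"
    return headers


# A regular posts feed gets the header; a podcast feed does not.
posts_headers = feed_response_headers("posts")
podcast_headers = feed_response_headers("podcast")
print(posts_headers)
print(podcast_headers)
```

Keeping the exemption list as a parameter means a site owner could widen or empty it without touching the rest of the logic.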

A filter like that would be especially useful for excluding the noindex tag from RSS feeds with podcasts. With that in mind, when it comes to controlling link equity and indexing content, we have three primary tools, each of which serves a different function.

Consider the following tools:

- Robots.txt directives prevent compliant search engines from accessing specified resources. This is useful for admin pages and other directories that do not need to be included in the search listings.

- Meta tags such as noindex and noarchive assume search-engine access and enable spiders to crawl the pages and follow links. Link equity will also be passed through such pages.

- Nofollow tags as applied directly to links allow search engine access but forbid the passing of link equity to the target pages. This method is useful for controlling directly the flow of link juice throughout a site.
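The page-level and link-level tools in the list above can be illustrated with a small sketch built on Python's standard `html.parser`. It reads the robots meta directives of a page and separates followed links from nofollow links, roughly the way a compliant spider might. The class name and the sample HTML are hypothetical and only meant to show the idea.

```python
from html.parser import HTMLParser


class RobotsHintParser(HTMLParser):
    """Collect robots meta directives and split links by rel="nofollow"."""

    def __init__(self):
        super().__init__()
        self.meta_directives = set()
        self.followed_links = []
        self.nofollow_links = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            # e.g. content="noindex, noarchive"
            content = attrs.get("content") or ""
            for part in content.split(","):
                if part.strip():
                    self.meta_directives.add(part.strip().lower())
        elif tag == "a" and attrs.get("href"):
            rel_values = (attrs.get("rel") or "").lower().split()
            if "nofollow" in rel_values:
                self.nofollow_links.append(attrs["href"])
            else:
                self.followed_links.append(attrs["href"])


# Hypothetical page: noindexed, but its ordinary links can still be followed.
SAMPLE_PAGE = """
<html><head><meta name="robots" content="noindex, noarchive"></head>
<body>
<a href="/about/">About</a>
<a rel="nofollow" href="https://example.com/ad">Ad</a>
</body></html>
"""

hints = RobotsHintParser()
hints.feed(SAMPLE_PAGE)
print(sorted(hints.meta_directives), hints.followed_links, hints.nofollow_links)
```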

Depending on your SEO goals, manipulating the ebb and flow of link juice is greatly facilitated by the functional variety provided by these three techniques. Be that as it may, to make sure that your feeds are not indexed by Google and other compliant search engines, add the following code to the channel element of your XML-based (RSS, etc.) feeds:

<xhtml:meta xmlns:xhtml="http://www.w3.org/1999/xhtml" name="robots" content="noindex" />
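If the feed is generated or post-processed by your own code, the same element can be added programmatically. The following is a minimal sketch using Python's standard `xml.etree.ElementTree`; the function name and the sample feed are assumptions for illustration, and it only handles RSS 2.0 style feeds that have a `channel` element directly under the root.

```python
import xml.etree.ElementTree as ET

XHTML_NS = "http://www.w3.org/1999/xhtml"
# Ask ElementTree to serialize this namespace with the "xhtml" prefix.
ET.register_namespace("xhtml", XHTML_NS)


def add_noindex_meta(feed_xml: str) -> str:
    """Insert the robots noindex meta element into the feed's channel."""
    root = ET.fromstring(feed_xml)
    channel = root.find("channel")
    if channel is None:
        raise ValueError("no <channel> element found in feed")
    meta_tag = f"{{{XHTML_NS}}}meta"
    # Avoid adding the element twice if it is already present.
    if channel.find(meta_tag) is None:
        meta = ET.Element(meta_tag, {"name": "robots", "content": "noindex"})
        channel.insert(0, meta)
    return ET.tostring(root, encoding="unicode")


# Hypothetical minimal feed used only to exercise the function.
SAMPLE_FEED = (
    '<rss version="2.0"><channel>'
    "<title>Example Blog</title>"
    "<link>https://example.com/</link>"
    "</channel></rss>"
)

noindexed_feed = add_noindex_meta(SAMPLE_FEED)
print(noindexed_feed)
```

Note that ElementTree declares the `xhtml` namespace on the root element rather than on the meta element itself, which is equivalent XML.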

The good news for a beginner webmaster is that you can also tweak these settings using your SEO plugin, as we elaborate in the next section, along with a few tips for managing your canonical URLs and pagination.

Managing Your Canonical URLs, Noindex RSS Feeds & Pagination

According to the AIOSEO team, Canonical URLs are an essential part of SEO and are especially important with WordPress. Whilst Canonical URLs are a feature built into WordPress, a plugin tool such as the All In One SEO (AIOSEO) Pack can help you manage them. They make sure that search engines don’t get confused when different URLs point to the same content.

For example, in WordPress, you can have multiple ways to reach content. You may have a post about “the difference between design and development” which you’ve placed in two categories, resulting in two URLs for the same post.

When both URLs point to the same post, a canonical URL tells search engines like Google, Yahoo, Bing, and the like which is the preferred URL for your blog post, so that they don’t think you have duplicate content on your website. WordPress itself does a great job of outputting the canonical URL for your content.

This means that a plugin such as the Yoast SEO Plugin or All in One SEO is just a complementary supplement for WordPress, adding some advanced features as follows:

Creating Custom Canonical URLs

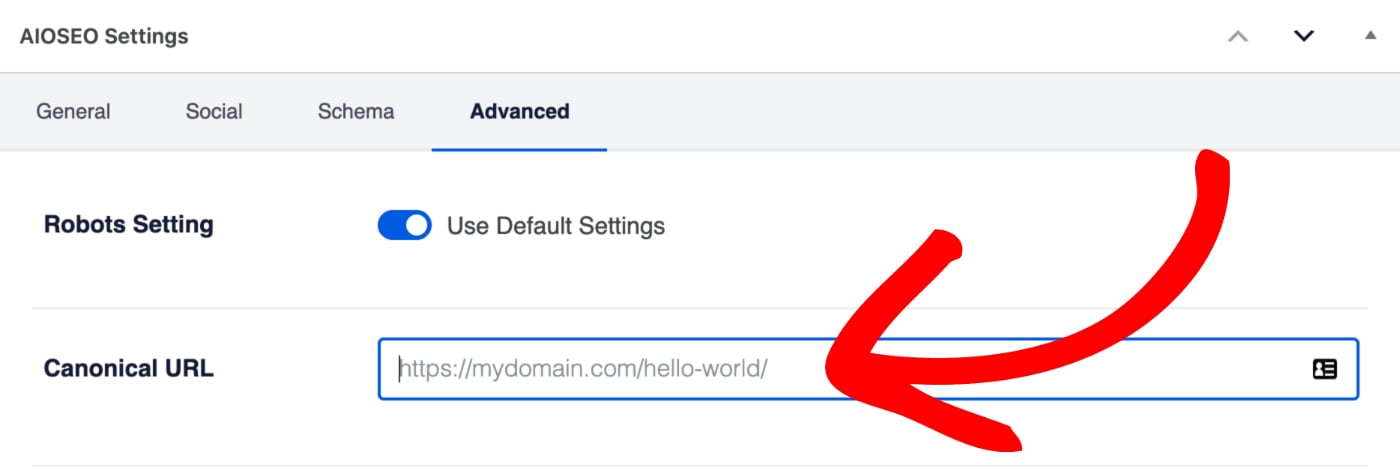

Basically, the All in One SEO Plugin lets you specify your own canonical URL for any item of content. You can find this setting by editing your content and scrolling down to the AIOSEO Settings section and clicking on the Advanced tab.

Enter the full URL you want to set for the Canonical URL in the field.

No Pagination For Canonical URLs

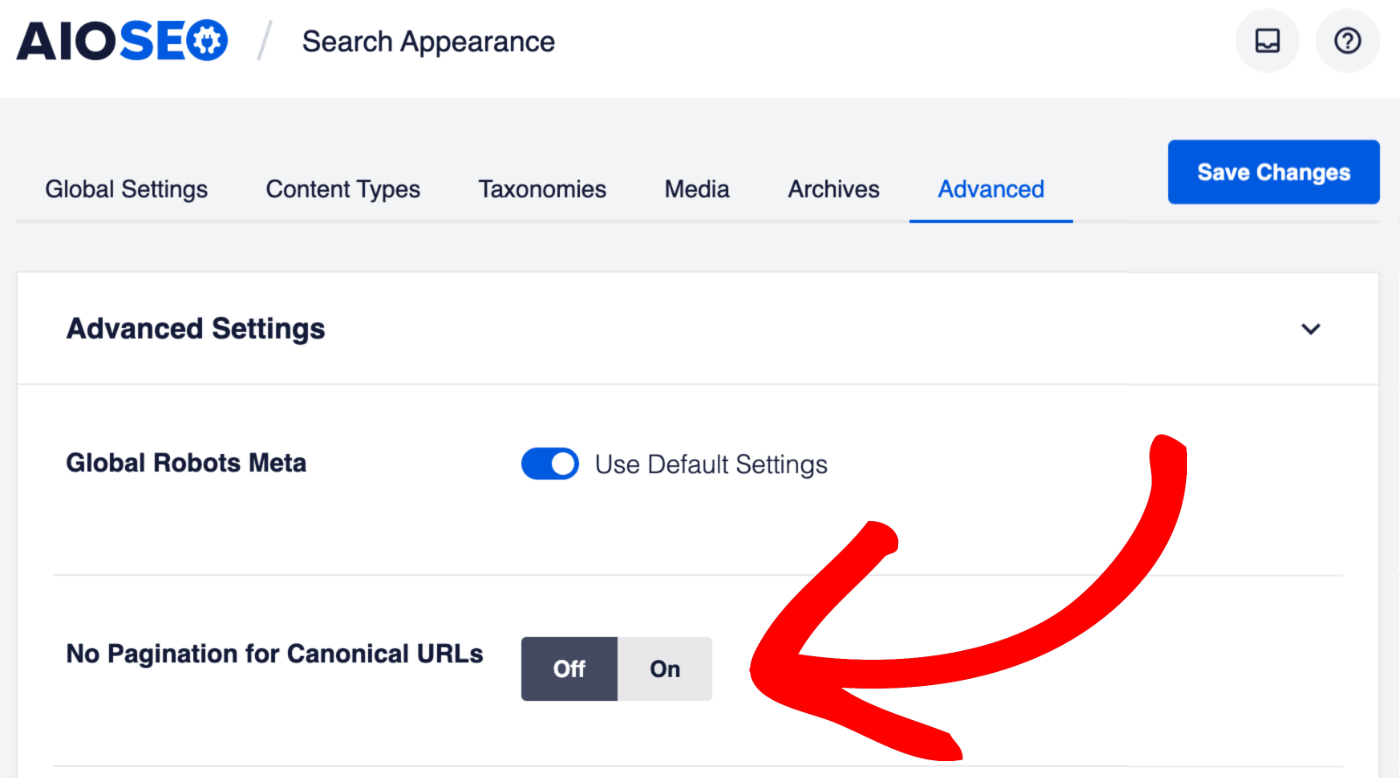

Equally important, the All in One SEO Plugin also lets you remove the page numbers from the Canonical URLs of paginated content. This means that you can point paginated content to the first page.

For example, page 2 of the Hello World post would normally have its own paginated URL, but All in One SEO would set the Canonical URL to the URL of the first page. You can find this setting by clicking on Search Appearance in the All in One SEO menu and then clicking on the Advanced tab.
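The idea of pointing paginated content back to its first page can be sketched in a few lines of Python. This is not how All in One SEO implements the feature; it is an illustration that assumes two common WordPress URL shapes, a trailing `/2/` for paginated posts and a trailing `/page/2/` for paginated archives, and the example.com URLs are made up.

```python
import re
from urllib.parse import urlsplit, urlunsplit

# Assumed URL shapes: ".../post-slug/2/" and ".../page/2/".
_PAGE_SUFFIX = re.compile(r"/(?:page/)?\d+/?$")


def canonical_without_page_number(url: str) -> str:
    """Drop a trailing page number so the URL points at the first page."""
    parts = urlsplit(url)
    path = _PAGE_SUFFIX.sub("/", parts.path)
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


post_canonical = canonical_without_page_number("https://example.com/hello-world/2/")
archive_canonical = canonical_without_page_number("https://example.com/blog/page/3/")
print(post_canonical)     # https://example.com/hello-world/
print(archive_canonical)  # https://example.com/blog/
```

One limitation worth stating: a purely numeric final path segment, such as a year archive, would also be stripped by this pattern, so a real implementation needs to know which URLs are actually paginated.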

Change the toggle to Yes to remove page numbers from the Canonical URLs of paginated content.

Note that the static posts page feed allows users to subscribe to any new content added to your blog page, while the authors feed allows your users to subscribe to any new content written by a specific author. Also, the post comments feed allows your users to subscribe to any new comments on a specific page or post.

You may also consider the likes of the Atom Feed, RDF/RSS 1.0 Feed, Search Feed, Attachments Feed, Paginated RSS Feeds, Post Type Archive Feeds, Taxonomy Feeds, and much more…

A few other important RSS Feed notes for webmasters:

On one hand, the Global RSS Feed is how users subscribe to any new content that has been created on your website. Disabling the global RSS feed is NOT recommended, as it will prevent users from subscribing to your content and can hurt your SEO rankings. On the other hand, the global comments feed allows users to subscribe to any newly added comments.

There are also the Crawl Cleanup options. Removing unrecognized query arguments from URLs and disabling unnecessary RSS feeds can help save search engine crawl quota and speed up content indexing for larger sites. If you choose to disable any feeds, those feed links will automatically redirect to your homepage or the applicable archive page.

Remember, too, that the Remove Query Args option helps prevent search engines from crawling every variation of your pages with unrecognized query arguments. Only enable this if you understand exactly what it does, as it can have a significant impact on your website.
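To show what removing unrecognized query arguments means in practice, here is a small Python sketch based on the standard `urllib.parse` module. The allow-list of argument names is hypothetical, and the plugin's own rules may well differ; the sketch only demonstrates the general technique of keeping known arguments and dropping the rest.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical allow-list; a real site would tailor this to its own URLs.
ALLOWED_ARGS = frozenset({"s", "p", "page_id"})


def strip_unrecognized_args(url: str, allowed=ALLOWED_ARGS) -> str:
    """Return the URL with every query argument not in `allowed` removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key in allowed
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )


search_url = strip_unrecognized_args("https://example.com/?s=rss&utm_source=news")
post_url = strip_unrecognized_args("https://example.com/post/?utm_source=news&ref=x")
print(search_url)  # https://example.com/?s=rss
print(post_url)    # https://example.com/post/
```

This also hints at why the option deserves care: any argument your site genuinely depends on must be on the allow-list, or the pages behind it stop being reachable under that URL.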

What The Pagination And RSS Feed Indexation Future Holds

Publishers should, in reality, very much want their RSS feeds to be indexed, because it can help aggregators and search engines direct users to where the newest content is. Search engines are smart enough to understand the difference between a feed and a webpage, and they use the feed as a pointer to the webpage where the real source of the content resides.

Allowing search engines to index RSS feeds is therefore an important way to drive traffic to the webpages of the actual content. There is no scenario in which a publisher is not interested in having their latest content indexed. Old feed generators, such as the deprecated FeedBurner, still provide users with the outdated option to noindex feeds, precisely in order to prevent them from being penalized by search engines.

As such, moving forward, content publishers need to be reassured that it is no longer an issue, and that indexed feeds do not create penalty situations. Google itself will normally not show RSS feeds in search results. The noindex meta-tag is not good for publishers.

Any publisher who wants to enable RSS readers, aggregators, and APIs to reuse details of their content should make sure to remove the noindex meta-tag from their RSS pages and from their software that generates RSS feeds.

The noindex meta-tag to be removed looks like this:

<meta name="robots" content="noindex">

This code tells search engines that they should not index the content of the RSS feeds. Eventually, the custom robots.txt file may evolve into a full-fledged, highly flexible protocol that replaces noindex, noarchive, nofollow, disallow, and other crawl-related directives with its own specifically developed language. In layman’s terms, it may grow from the elementary features we see today into something like a CSS for spiders.

Summary Thoughts:

Usually, noindex markup is placed on the website by the webmaster, not by Google Search Console. It is customary to have noindex on both /tag/ and /feed URLs, since they will rarely contribute to search traffic. Through Search Console, Google is just letting you know the markup is there, not that it is necessarily a problem that calls for alarm.

Simply put, noindex should only be used for web pages you don’t want showing up in search results or want to hide from the external world: for example, a test page, an archive page, or something similar. Anything else that is not relevant to the publisher’s business should also have the noindex tag, so that it doesn’t end up taking the place of the really important pages in search results.

Not to mention, Google’s algorithm tends to avoid placing multiple links from the same domain on the front page (unless the website has a good ranking). Even before we came to a full realization, we had to do a few tests and tweaks here and there ourselves, so take your time!

That’s it! That is everything you needed to know about pagination, how it impacts SEO, and RSS feed indexing. We hope that this guide will help you in your next SEO audit of your website, so that you can improve its crawlability, indexation, searchability, and overall ranking in the SERPs (Search Engine Result Pages).